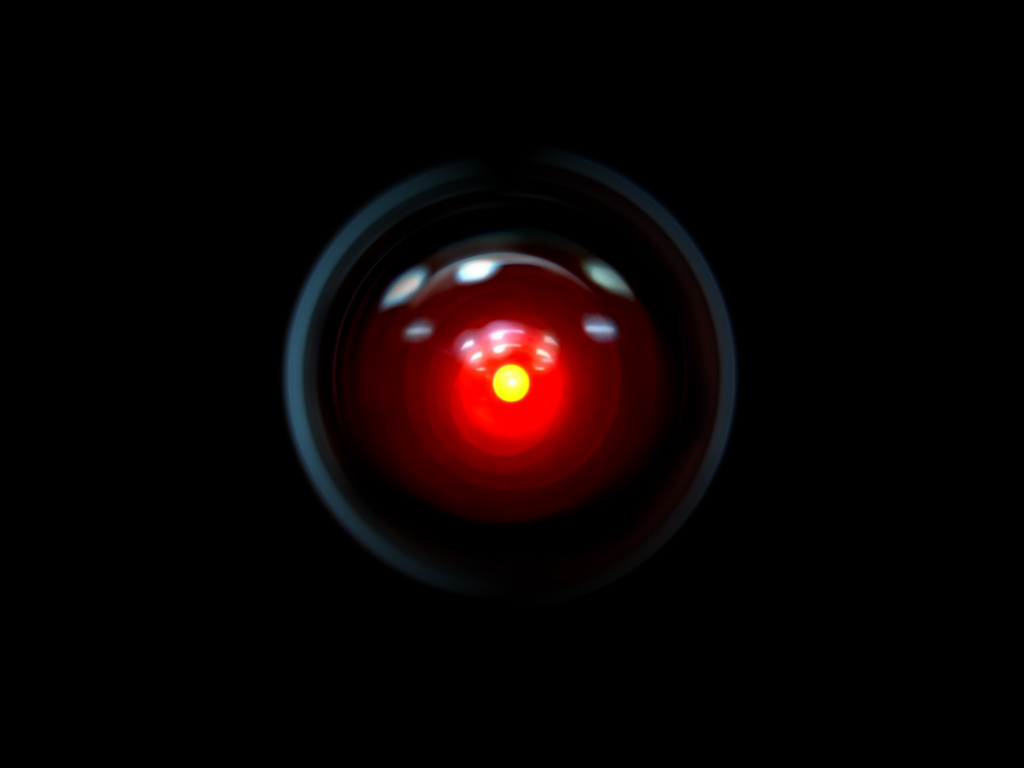

Generative artificial intelligence, and in particular ChatGPT, has taken the world by storm. Some intellectuals are proposing we create a worldwide ban on advanced AI research, using military operations if needed. Many leading researchers and pundits are proposing a six-month ‘pause’, during which time we could not train more advanced intelligences.

The six-month pause is effectively unenforceable. We did lock down nearly the entire world to suppress covid. Except that, as the partygate scandal showed, high-ranking politicians were happy to sidestep the rules. Do we seriously think that the NSA and other major government agencies are going to obey such a pause?

Covid also showed that what starts as a short pause can be renewed again and again, until it becomes unsustainable.

Philosophers and computer scientists have spent the last fifty years doing research on artificial-intelligence safety. Do you think that we are due for a conceptual breakthrough in the next six months? We have had rogue but helpful artificial intelligence in mainstream culture for a long, long time: HAL 9000 in 2001: A Space Odyssey (1968), Mike in The Moon is a Harsh Mistress (1966). I grew up watching Lost in Space (1965), where an intelligent robot commonly causes harm. Shelley’s Frankenstein was published in 1818. A case can be made that the danger is not the monster itself, but rather how human beings handle it. In some sense, it is Frankenstein himself who is the cause of the tragedy, not his monster. Six months won’t cut it for a new philosophical breakthrough.

Going nuclear and banning advanced AI research definitively is flat-out unfeasible: it will almost certainly require going to war. You might object that we successfully stopped the proliferation of nuclear weapons… Except that it is a bad analogy: what is suggested is an entire worldwide ban. We never tried to ban nuclear weapons, we only limited them to powerful countries. If all you want to do is keep advanced AI out of the hands of some countries like North Korea, that can be arranged by cutting them off from the Internet and preventing them from importing computer equipment. But to stop Americans from doing artificial-intelligence research? When we tried to ban gain-of-function research on viruses, it moved to China.

Currently, only large corporations and governments can produce something akin to ChatGPT. However, it seems possible that the cost of training and running generative artificial-intelligence models might fall in the coming years. If it does, we might end up facing a choice between banning computing entirely or accepting powerful new artificial-intelligence software.

This being said, you can stall technological progress. We basically stopped improving nuclear reactors decades ago by adding layers of regulations.
